MSI Accelerates Enterprise AI Strategy with Cloud-to-Edge Ecosystem at COMPUTEX 2026

Scaling Industrial AI from Liquid-Cooled Data Centers to Autonomous Edge Operations

MSI, a global leader in high-performance computing and industrial solutions, returns to COMPUTEX 2026 (Booth #J0605a) to unveil its strategic AI roadmap. This year’s showcase centers on a seamless continuum from data center scale to autonomous edge execution, featuring liquid-cooled AI platforms and supercomputers built on NVIDIA MGX, NVIDIA DGX Station, and NVIDIA DGX Spark architectures.

Cloud Foundation: Liquid-Cooled Infrastructure for Hyperscale AI

To meet the demands of modern AI data centers, MSI is introducing high-density platforms that prioritize thermal efficiency and performance:

CG681-S6093 6U Liquid-Cooled AI Server (based on NVIDIA MGX)

Built on NVIDIA MGX architecture, this server supports dual AMD EPYC™ processors and up to eight NVIDIA RTX PRO 6000 Blackwell Server Edition Liquid Cooled GPUs. It delivers the compute density required for large-scale AI inference and supports a wide range of workloads, including agentic AI, physical AI, scientific computing, simulation, graphics, and video.

High-Speed Connectivity:

The platform is equipped with NVIDIA ConnectX-8 SuperNICs, providing up to 8×400Gbps Ethernet connectivity for distributed AI environments.

Rack-Scale Scalability:

MSI’s liquid-cooled rack-scale architecture supports up to four CG681-S6093 GPU systems within a 48RU configuration. Networking is anchored by NVIDIA Spectrum-4 SN5600 Ethernet switches and SN2201 out-of-band switches for high-performance AI cluster connectivity.
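The sketch below gives a rough sense of what the maximum configuration adds up to. It multiplies the per-server figures quoted above ("up to eight" GPUs, "up to 8×400Gbps") by the four systems per 48RU rack. This is a back-of-the-envelope illustration and not an MSI specification. It assumes every server is fully populated and every port runs at its nominal line rate. The function name `rack_totals` is hypothetical.

```python
# Back-of-the-envelope totals for the CG681-S6093 rack-scale configuration.
# Assumption: every server is fully populated and every NIC port runs at
# its nominal 400 Gbps; real throughput depends on topology and workload.

GPUS_PER_SERVER = 8        # "up to eight" RTX PRO 6000 Blackwell GPUs
NIC_PORTS_PER_SERVER = 8   # "up to 8x400Gbps" via ConnectX-8 SuperNICs
PORT_SPEED_GBPS = 400      # nominal Ethernet line rate per port
SERVERS_PER_RACK = 4       # "up to four" systems in a 48RU rack


def rack_totals(servers: int = SERVERS_PER_RACK) -> dict:
    """Return GPU count and nominal NIC bandwidth (Gbps) for `servers` nodes."""
    per_server_gbps = NIC_PORTS_PER_SERVER * PORT_SPEED_GBPS
    return {
        "gpus": servers * GPUS_PER_SERVER,
        "per_server_gbps": per_server_gbps,
        "total_gbps": servers * per_server_gbps,
    }


if __name__ == "__main__":
    totals = rack_totals()
    # Integer Gbps avoids float rounding; convert to Tbps only for display.
    print(f"{totals['gpus']} GPUs, {totals['total_gbps'] / 1000} Tbps nominal NIC bandwidth")
```

With the defaults, this reports 32 GPUs and 12.8 Tbps of nominal NIC bandwidth per rack (3.2 Tbps per server). Usable cluster bandwidth will be lower once switch topology and protocol overhead are taken into account.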

Deskside Development: The Desktop AI Supercomputer

Bridging the gap between the data center and the developer's desk, MSI presents high-performance AI computing for local workflows:

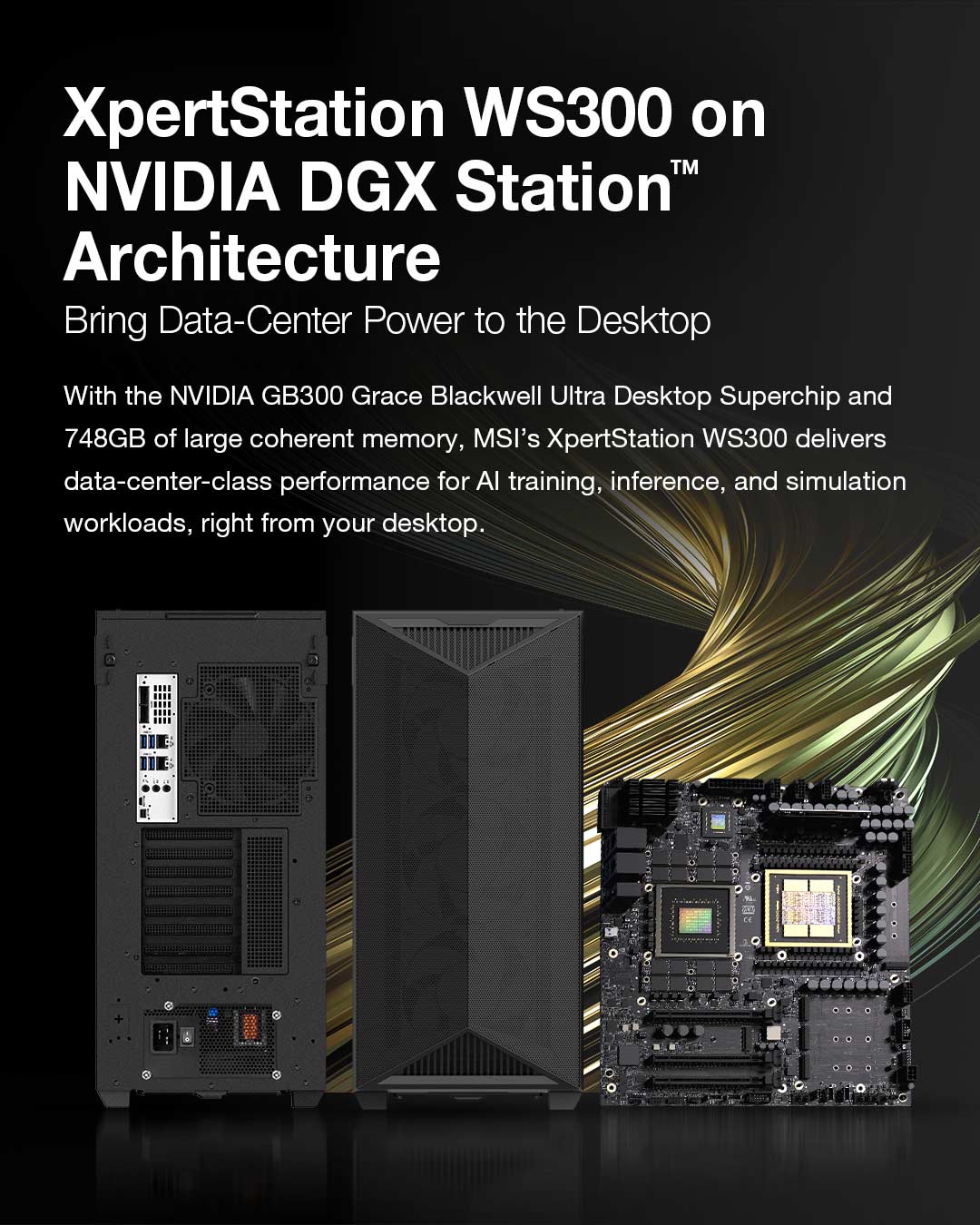

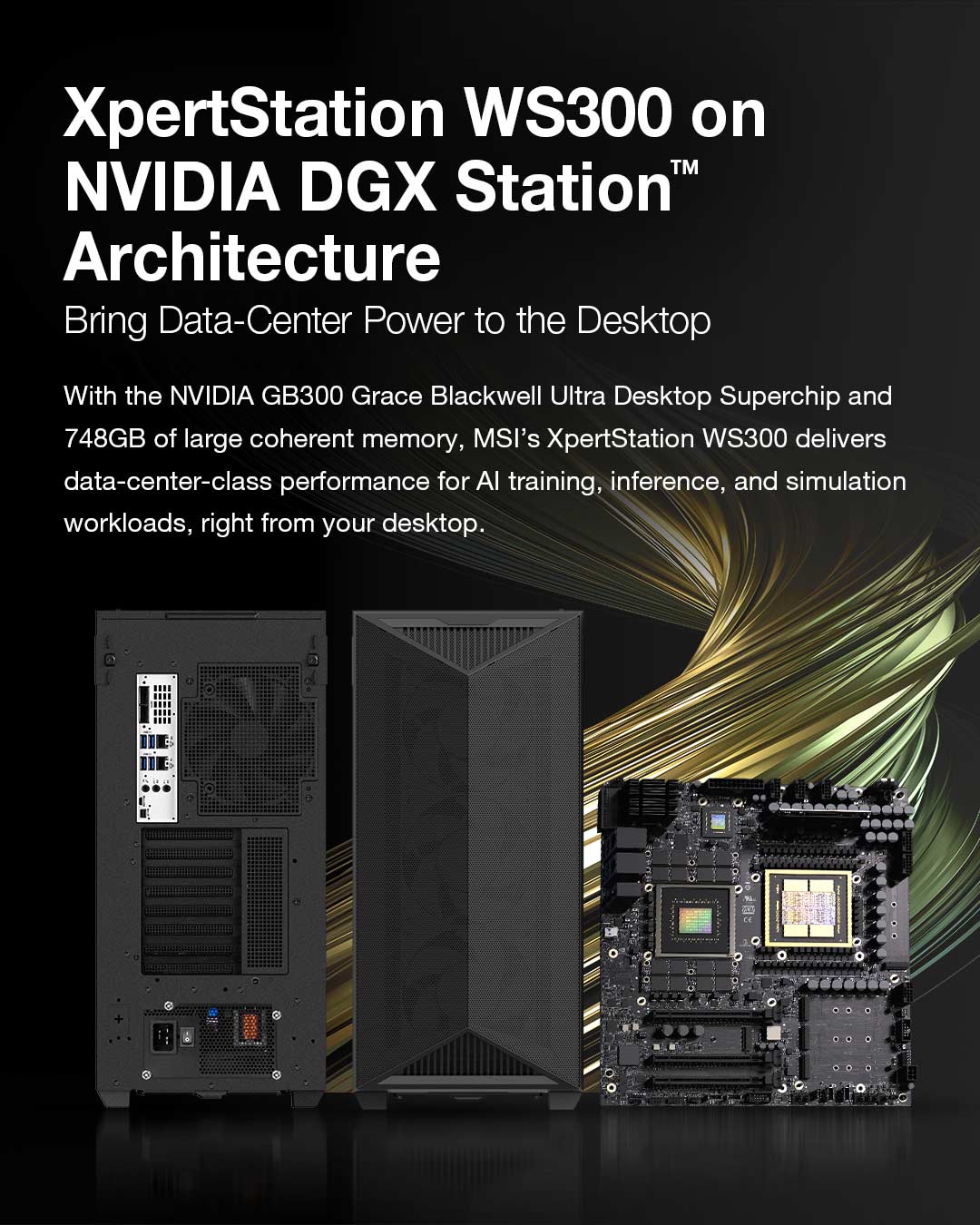

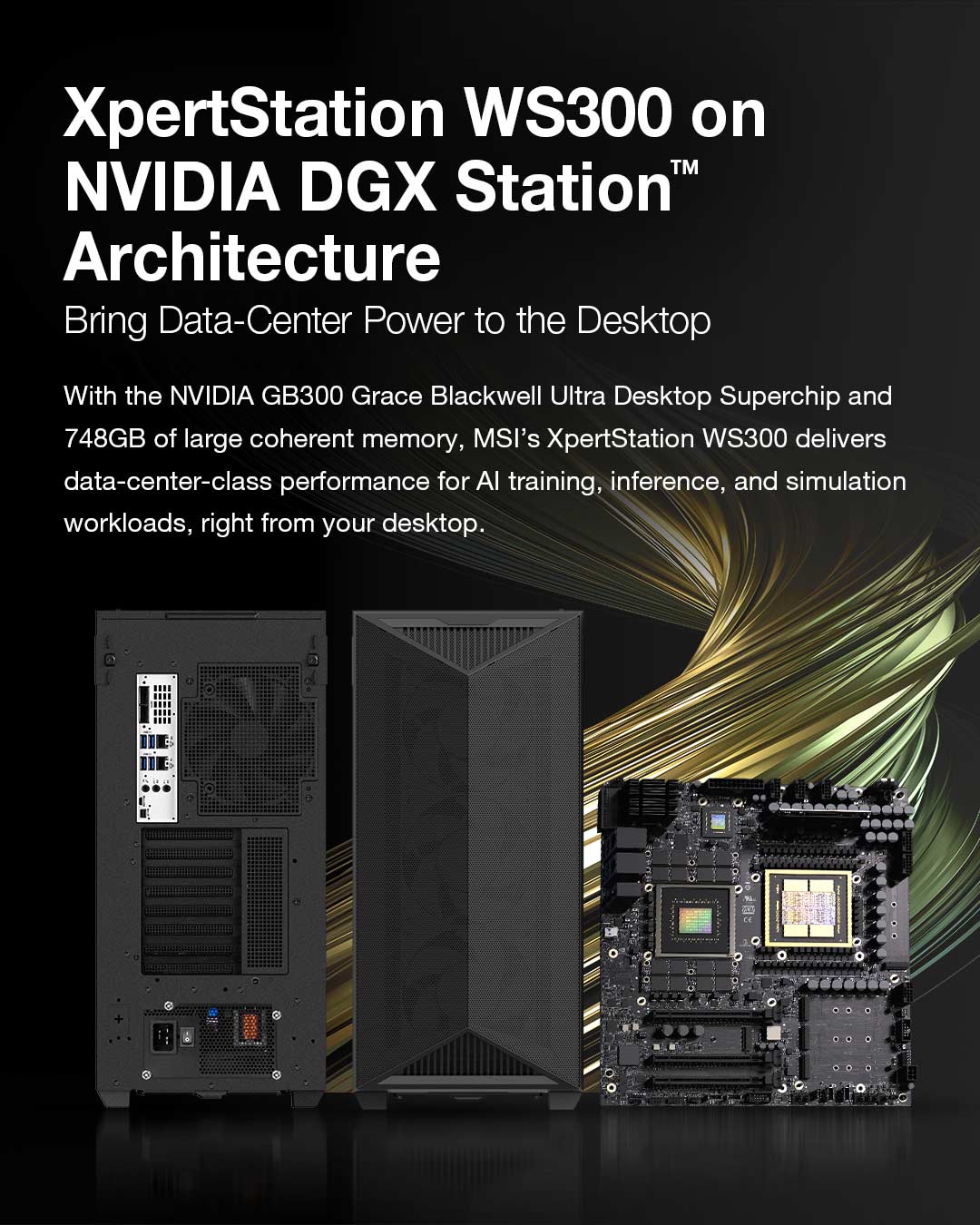

XpertStation WS300 (NVIDIA DGX Station):

Built on NVIDIA DGX Station, the XpertStation WS300 is MSI's desktop AI supercomputer, bringing high-performance AI computing to local development workflows.

Efficient AI Refinement:

Designed for AI model development, fine-tuning, and inference, the WS300 combines a compact, liquid-cooled design with dual 400GbE networking powered by NVIDIA ConnectX-8 SuperNICs to sustain peak performance. It was recently showcased at GTC Taipei.

Edge Execution: EdgeXpert and Autonomous Intelligence

MSI is bringing data center-level performance directly to real-world environments through the debut of its edge supercomputing platform:

EdgeXpert AI Supercomputer:

Built on the NVIDIA DGX Spark platform, EdgeXpert enables enterprises to deploy smarter, faster, and more scalable AI agents and applications at the edge.

OpenClaw/Hermes Agent on MSI EdgeXpert:

MSI showcases prominent open-source agentic AI frameworks running locally on EdgeXpert, supporting sustainable on-premises operation and self-optimization.

EU CRA Compliant Agentic AI with Galene Elettra:

Powered by a Multi-Agent System (MAS), this solution enables intelligent decision-making across complex workflows while maintaining compliance with the European Cyber Resilience Act (CRA).

Legal AI Suite:

A specialized enterprise AI platform that streamlines legal research, document analysis, and IP governance.

Smart Campus Patrol:

The Smart Campus Patrol Vehicle demonstrates real-time computer vision for precision inspection and smarter industrial operations.

Tarot AI Experience with Reachy Mini:

An interactive demonstration of Agentic AI, blending robotics with generative AI to deliver personalized engagement.

End-to-End AI in Action Across Industries

MSI delivers purpose-built Edge AI solutions developed with leading industry partners across diverse vertical markets:

Smart Manufacturing & Semiconductor:

The Edge AI Box MS-C910E with Memorence AI enables real-time machine vision. Partnering with Qiming Tech, MSI leverages the Edge AI Box MS-C939 to deliver real-time, high-precision automated optical inspection (AOI) that helps optimize semiconductor production yield.

Voice AI & Driver Safety:

Powered by Ubestream, the Slim Box MS-C926 provides real-time translation, while the Embedded Box MS-C927 enables instant voice-to-order experiences for retail. For driver safety, the In-vehicle Box MS-C932 runs real-time AI driver fatigue detection to enhance on-road safety.

Smart Transportation & Precision Agriculture

Integrating edge inference with frontline mobility and field operations, MSI optimizes commercial transit and smart farming workflows:

Smart Transportation × AI Vision Solutions:

MSI is expanding its smart transportation portfolio with mobility solutions powered by Edge AI and in-vehicle vision. The lineup features fleet management tablets, telematics boxes, smart rearview mirrors, and AI-enabled advanced driver-assistance systems (ADAS) and driver monitoring systems (DMS) for fleet monitoring and video analytics. Using real-time AI processing, MSI helps logistics and fleet operators improve management efficiency and strengthen road safety.

Smart Agriculture & Drone Integration:

MSI introduces an intelligent agricultural solution that seamlessly integrates autonomous drone technology, Edge AI computing, and ground control systems. Built for harsh environments, this comprehensive platform combines drone ground control stations, rugged tablets, T-Box connectivity modules, and centralized multi-drone management platforms. It is engineered for critical use cases including automated field inspection, precision spraying, crop monitoring, and pest/disease identification. By leveraging real-time video analytics, edge inference, and cloud data synchronization, the solution empowers agricultural operators to boost efficiency, streamline operational workflows, and accelerate the transition to smart farming.

Extreme Field Mobility (MS-NE21):

The NE21 Rugged Industrial Tablet features an Intel® 13th Gen Core™ i Series (Raptor Lake-U) processor and supports up to 32GB of LPDDR5/LPDDR5X memory and 2TB of PCIe SSD storage. Built to MIL-STD-810G standards with an IP65 rating, it withstands 4-foot drops and operates from -10°C to 50°C. It offers a 650-nit, sunlight-readable 11.6" display with glove and wet-touch modes, plus a hot-swappable battery system (64Wh to 98.1Wh) for continuous operation.

Industrial Panel PCs & Robust Hardware Foundation

To support heavy enterprise workloads, MSI highlights its high-reliability industrial hardware portfolio:

Industrial Panel PCs:

The lineup includes the MS-1A81 (21.5") for Smart Healthcare clinical workflows; the MS-1A22 (12.1") and MS-1A32 (15") for factory floor monitoring; and the MS-1A91 (10.1") and MS-OP01 (15.6") for secure Smart Locker Systems.

Hardware Backbone:

For multi-industry infrastructure durability, MSI showcases its robust hardware portfolio featuring the Intel Wildcat Lake, NXP, and NVIDIA® Jetson™ Thor™ series platforms, alongside industrial 4U rackmount systems.

Sustainability & EV Charging Infrastructure

Bringing intelligent infrastructure to the energy transition, MSI presents its smart EV charging solutions:

Eco Series Home EV Charger:

A Taiwan Excellence Award winner, this residential smart charger delivers up to 22kW of three-phase output. Powered by NXP industrial MCUs with AI smart control, it integrates with solar and energy storage systems. Its UL94 V-0 flame-rated enclosure self-extinguishes within 10 seconds, and RDC-DD leakage detection improves safety in underground parking. The charger holds global safety certifications, RPC certification in Taiwan, and USD 5 million in product liability insurance.
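To see where a 22kW three-phase rating typically comes from, the standard three-phase power relation can be applied. The supply figures below are assumptions and are not stated by MSI: a common European 400 V line-to-line (230 V per phase) supply, 32 A per phase, and a power factor close to 1.

$$
P = \sqrt{3}\,V_{\mathrm{LL}}\,I\,\cos\varphi \approx \sqrt{3}\times 400\,\mathrm{V}\times 32\,\mathrm{A}\times 1 \approx 22.2\,\mathrm{kW}
$$

Equivalently, 3 × 230 V × 32 A ≈ 22.1 kW. The small difference comes from 400 V being only approximately √3 × 230 V. A single-phase 230 V, 32 A connection would supply about a third of this, which is why 22kW AC charging typically requires a three-phase connection.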

MSI Hyper 80 Dual Fast Charger:

Designed for urban commercial hubs, this DC fast charger delivers 80kW power distribution within an industry-leading 30cm ultra-slim chassis to maximize space efficiency.

Visit MSI at COMPUTEX 2026, Booth #J0605a, Hall 1 (1F), to experience the future of the cloud-to-edge AI ecosystem.

MSI: https://www.msi.com
MSI YouTube: https://www.youtube.com/user/MSIGamingGlobal
MSI Facebook: https://www.facebook.com/MSIGaming
MSI Instagram: https://www.instagram.com/MSIGaming
MSI X: https://x.com/msigaming